Entrusting critical business processes to an AI system has become standard practice in the financial industry, with banks expecting algorithms to deliver reliable, unbiased and fair outcomes. But human prejudice, gender inequalities and stereotypes often find their way into AI-driven decisions. Avoiding unethical, discriminatory decisions in AI systems is a major concern for financial institutions, and one we can help with.

Does your human resources department use artificial intelligence (AI) recruiting systems? And which decisions do these systems influence? AI systems often have great potential for automating and standardizing high-volume tasks, but ultimately they rely on real-world data and examples whose outcomes are based on human judgement. Therefore, they may unintentionally reinforce existing human biases. One example of such bias is racial discrimination in hiring in US labor markets. A meta-analysis of field experiments based on 24 studies since 1989 [1] shows that, even today, white Americans systematically receive 36% more call-backs than black Americans and 24% more call-backs than Hispanic Americans. If we allow AI systems to learn from such unfair human biases, we can be almost certain that they will eventually make unfair decisions, thereby treading into an ethical no-go zone.

Ethical AI is of paramount importance in shaping the future of banking. Hence, we take this opportunity to describe how Avaloq’s approach, strengthened by the robust technical foundation NEC has built through many years of research, will not only meet but also exceed the highest regulatory requirements set by the world’s strictest regulatory bodies.

First, we explore how the evolution of AI models from very simple rule-based systems to complex machine learning (ML) algorithms has changed post-production monitoring. We show how the rise in AI sophistication has simultaneously made it more difficult to monitor such systems for unfair bias and discrimination. Second, we discuss the regulations set forth in the AI space by major regulatory bodies, and how the progressiveness of the European Commission creates an opportunity for an “ethical AI” industry in the EU. Third, we demonstrate how Avaloq’s approach ensures a high standard of ethics in AI that goes well beyond the minimum requirements, while enabling a smooth end-to-end data science user journey for our clients.

Evolution of AI models

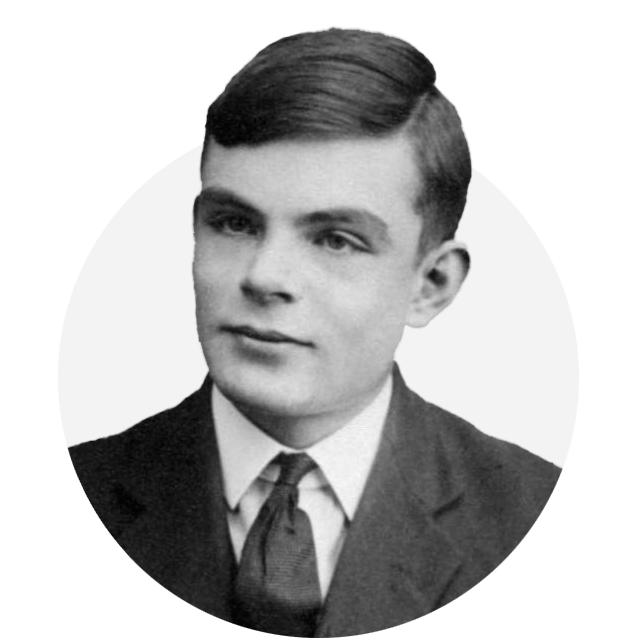

Alan Turing – Would modern chatbots stand the Turing Test he envisioned in 1950?

“I propose to consider the question, ‘Can machines think?’”, wrote Alan Turing in his 1950 paper “Computing Machinery and Intelligence” while working at the University of Manchester [2]. He devised his famous Turing Test, reasoning that if a machine could carry on a conversation indistinguishable from a conversation with a human being, it was reasonable to call that ability intelligence. While widely criticized, the Turing Test proved highly important in the philosophy of AI, as it was one of the earliest attempts to judge machine intelligence by its ability to exhibit human-like behavior.

Today, we talk a lot about AI systems. End-user interfaces such as Amazon’s Alexa and Apple’s Siri have entered our daily lives. Would they pass the Turing Test? Have you ever conversed with a chatbot, only to discover afterwards that it wasn’t a human? As simple as the setup of the Turing Test may be, its results aren’t always as clear-cut. It remains up to end-users to decide whether an AI model truly behaves like a human.

Fast-forwarding 70 years, we see that AI has come a long way. Periods of optimism (1960-1975 and 1980-1990), which paved the way for applications that were heuristic in nature (reasoning as search, natural language processing using semantic networks) and exploited knowledge-based systems [3], alternated with periods of negative sentiment due to limited computing power and storage. Over time, simple rule-based algorithms that were easy to dissect and monitor gave way to complex algorithms involving machine learning. The overview below (Figure 1) shows how rule-based systems offer direct control over the rules and a shorter initial time to implementation, while machine learning systems tend to be more robust and easier to maintain.

Ethical artificial intelligence – avoid human bias in AI

Figure 1 is a typical comparison of rule-based versus machine learning systems. While it holds true in the context of development, the elephant in the room is post-production monitoring. Machine learning algorithms are usually more accurate than rule-based ones. However, they are also more opaque and therefore much more difficult to monitor for discrimination, especially as they have grown in complexity and sophistication over the decades. Even big technology companies, often regarded as pioneers in the field of AI, are not immune to scrutiny on this front, as evidenced by a 2019 allegation that Apple’s “sexist” credit risk model offered different credit limits to men and women due to gender-based discrimination [4]. While no gender discrimination was found during the investigation [5], this event highlights the importance of properly monitoring AI systems in order to preserve a high level of ethics and minimize reputational risk. A McKinsey article highlights the importance of preserving reputation amid the rise of double-edged AI. The article pinpoints risks along the entire life cycle of an AI solution, with potential ethical issues and biased or discriminatory outcomes featuring prominently [6].

AI ethics and regulation

Many organizations worldwide, both corporate and societal, are involved in establishing best practices for the use of AI. The European Commission (EC), one of the major regulators and one of the most progressive bodies in the world, emphasizes that trust is a must-have: “On Artificial Intelligence, trust is a must, not a nice to have. With these landmark rules, the EU is spearheading the development of new global norms to make sure AI can be trusted.” [7] According to the EC, AI should be lawful, ethical and robust [8]. The EC has put forward a set of seven key requirements that AI systems should meet to be deemed trustworthy (Figure 2).

Avaloq’s approach to ethical AI

In this section, we point out the measures we take to ensure an ethical approach to our AI-related endeavors within Avaloq Insight, our Data Analytics platform. All our predictive models benefit from an auditable end-to-end data governance process, including data lineage tracking (i.e., how your datasets are created and which sources they are based on), automated logging of predictions (i.e., storing all predictions made by AI systems in an interpretable format) and row-level security (i.e., a security concept that defines, for every row of data, who has access to it). With these features of our AI solution, we meet criteria 2, 3, 4, 6 and 7 set forth by the EC (see Figures 2 and 3).
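To make the row-level security idea concrete, here is a minimal Python sketch, assuming a simple policy table that maps each user to the booking centers whose rows they may read. The `Row` fields, the user names and the `ACCESS_POLICY` mapping are hypothetical illustrations, not part of Avaloq Insight.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    client_id: str
    booking_center: str
    amount: float


# Hypothetical policy: which booking centers each user is entitled to see.
ACCESS_POLICY: dict[str, set[str]] = {
    "analyst_zurich": {"ZRH"},
    "analyst_singapore": {"SIN"},
    "compliance_officer": {"ZRH", "SIN"},
}


def visible_rows(user: str, rows: list[Row]) -> list[Row]:
    """Return only the rows the given user is entitled to see."""
    # Deny by default: a user without a policy entry sees no rows at all.
    allowed = ACCESS_POLICY.get(user, set())
    return [row for row in rows if row.booking_center in allowed]


rows = [
    Row("C1", "ZRH", 100.0),
    Row("C2", "SIN", 250.0),
    Row("C3", "ZRH", 75.0),
]

print([r.client_id for r in visible_rows("analyst_zurich", rows)])  # ['C1', 'C3']
```

Filtering at the data layer, rather than in each report, means that any model or dashboard built on top can only ever read the rows its user is entitled to see.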

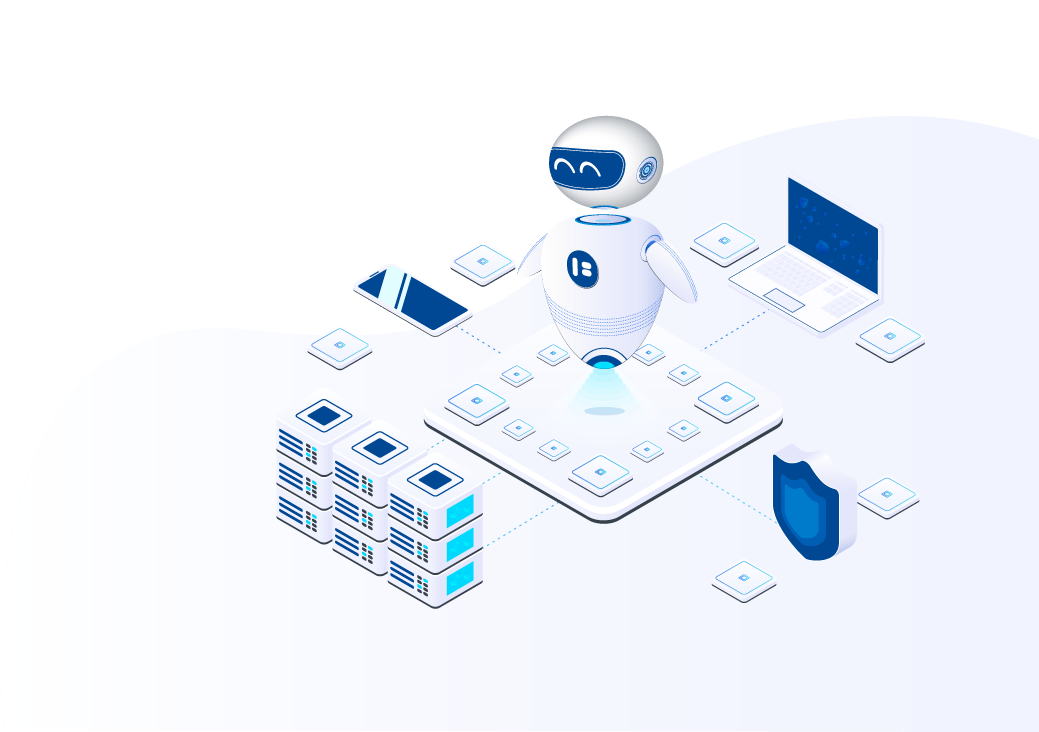

To guarantee human agency and oversight (criterion 1) and diversity, non-discrimination and fairness (criterion 5), we have devised a sophisticated monitoring concept (Figure 4). We categorize AI models according to whether they could lead to a negative outcome for the end-user: models that cannot result in a negative outcome for the end-user form group A, and models that can form group B.

Key metrics

Fairness refers to ensuring that the model does not exhibit discriminatory bias against particular gender, age, ethnicity or marital status groups, thereby satisfying criterion 5 (diversity, non-discrimination and fairness) proposed by the EC (see the section on AI ethics and regulation). Fairness is always monitored in the context of an unfavorable outcome that is predetermined specifically for each group B model. For credit risk scoring, for example, the unfavorable outcome is the non-approval of a loan; for a smart recruiting system, it is a non-hire. We aim for a maximum deviation of 15% in unfavorable outcomes across gender, age, ethnicity and marital status groups. For example, in a smart recruiting model, the percentage of non-hire recommendations for candidates over the age of 50 may not be more than 15% higher than the percentage of non-hire recommendations for candidates in their twenties.
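The fairness rule can be expressed as a small check. The sketch below is a minimal Python illustration, assuming the 15% limit is read as a relative excess over a chosen reference group (here, candidates in their twenties); it could equally be read as 15 percentage points. The group labels and counts are made up for illustration.

```python
from collections import defaultdict


def unfavorable_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of unfavorable outcomes (e.g. non-hire recommendations) per group."""
    totals: dict[str, int] = defaultdict(int)
    unfavorable: dict[str, int] = defaultdict(int)
    for group, is_unfavorable in records:
        totals[group] += 1
        unfavorable[group] += int(is_unfavorable)
    return {group: unfavorable[group] / totals[group] for group in totals}


def fairness_alerts(
    records: list[tuple[str, bool]],
    reference_group: str,
    max_relative_excess: float = 0.15,
) -> dict[str, float]:
    """Groups whose unfavorable rate exceeds the reference rate by more than 15% (relative)."""
    rates = unfavorable_rates(records)
    limit = rates[reference_group] * (1 + max_relative_excess)
    return {g: r for g, r in rates.items() if g != reference_group and r > limit}


# Hypothetical non-hire recommendations: (age group, was the outcome unfavorable?)
records = (
    [("20s", True)] * 40 + [("20s", False)] * 60
    + [("30s", True)] * 44 + [("30s", False)] * 56
    + [("over_50", True)] * 50 + [("over_50", False)] * 50
)

print(fairness_alerts(records, reference_group="20s"))  # {'over_50': 0.5}
```

With a 40% non-hire rate for the reference group, the limit is 46%: the 30s group (44%) passes, while the over-50 group (50%) triggers an alert.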

Quality refers to the predictive accuracy of the model and is benchmarked against the expected accuracy determined during the model development phase. If the current quality is more than 15% worse than the expected quality, there may be a drift (i.e., change) in the underlying data, and an alert is triggered. In this case, the model may need to be redesigned.
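A quality alert of this kind might look as follows: a minimal Python sketch, assuming accuracy as the quality metric and reading “more than 15% worse” as a relative drop from the expected accuracy. The function names, the expected accuracy of 0.90 and the recent predictions are hypothetical.

```python
def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Share of predictions that match the observed outcomes."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equally long")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def quality_alert(current: float, expected: float, max_relative_drop: float = 0.15) -> bool:
    """True if the current quality is more than max_relative_drop worse than expected."""
    if expected <= 0:
        raise ValueError("expected quality must be positive")
    relative_drop = (expected - current) / expected
    return relative_drop > max_relative_drop


# Expected accuracy of 0.90, assumed to have been measured during development.
recent_predictions = [1, 0, 1, 1, 0, 1, 0, 0]
recent_labels = [1, 0, 0, 1, 1, 1, 1, 0]
current = accuracy(recent_predictions, recent_labels)
print(current, quality_alert(current, expected=0.90))  # 0.625 True
```

Measuring the drop relative to the expected value keeps the same 15% tolerance meaningful for models whose baseline accuracies differ.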

Collaboration with NEC

NEC Labs have been researching the explainability, robustness and continuous monitoring of AI. Originally, this research attempted to put bounds of trust on AI results: how to detect cases where it is better to have a human in the loop, while trusting the AI in most other cases. The NEC research found mathematical measures of ‘similarity’ between a new case and past ones that rely on the internals of the AI model, rather than on simple statistical tests. Simply put, if a new case is sufficiently ‘similar’ to past cases, we can place more trust in the model. The data scientists at NEC then developed this into a novel explainability tool: if the current case is, according to the AI, very similar to some past examples, those examples serve as the explanation for the decision. Furthermore, when the AI is still found to be wrong, this similarity measure can be used to retrain the model and re-measure the results. For example, if end clients in a certain age range and locality tend to receive low credit scores for no justifiable reason, the model can be retrained to correct the AI on those cases without affecting its overall behavior. This can save a lot of revalidation time and allow almost ‘on the fly’ adaptations to handle edge cases.
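NEC’s actual similarity measure is not described here, so the following is only a generic stand-in: a Python sketch that compares a new case with past cases by cosine similarity of model-internal vectors (for example, hidden-layer activations), returns the most similar past cases as an example-based explanation, and routes the case to a human when nothing sufficiently similar exists. The vectors, case IDs and the 0.9 threshold are hypothetical.

```python
import math

Vector = list[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def explain_and_route(
    case: Vector,
    past_cases: list[tuple[str, Vector]],
    k: int = 2,
    trust_threshold: float = 0.9,
) -> tuple[list[str], bool]:
    """Return the IDs of the k most similar past cases and whether a human should review."""
    scored = sorted(
        ((cosine(case, vec), case_id) for case_id, vec in past_cases),
        reverse=True,
    )
    top = scored[:k]
    # Low similarity to everything seen before means low trust: keep a human in the loop.
    needs_human = not top or top[0][0] < trust_threshold
    return [case_id for _, case_id in top], needs_human


# Hypothetical internal representations of past, already-reviewed cases.
past = [("A", [1.0, 0.0]), ("B", [0.9, 0.1]), ("C", [0.0, 1.0])]

print(explain_and_route([1.0, 0.05], past))  # familiar case: (['A', 'B'], False)
print(explain_and_route([0.0, -1.0], past))  # unfamiliar case: needs human review
```

Returning past cases rather than feature weights is what makes the explanation example-based; in practice, the trust threshold would have to be calibrated on validation data.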

The same research also led to new monitoring tools. A well-known issue of AI models is their deterioration over time. Bits and bytes do not rot like fresh food, but subtle changes in the population of input cases can degrade model performance. A recent example is the effect of COVID-19 on the fraud models used in the financial industry to assess new clients: as many people had to interrupt their education or lost their jobs, and moved back in with their parents to support them or to save on rent, the fraud models created many more alerts. A financial institution needs tools that can monitor AI model inputs and outputs and alert that ‘something has changed’ without requiring expert humans to quality-check the AI results. Using the same ‘similarity’ methods originally developed for explainability and trust, it is now possible to track the ‘case distribution’ and alert on changes that may require human inspection of recent results.
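Along the same lines, a drift monitor can count how many recent cases look unfamiliar relative to a reference population. This Python sketch is again a generic stand-in for NEC’s method, assuming cosine similarity on hypothetical model-internal vectors; the 0.9 similarity threshold and the 20% alert fraction are illustrative choices.

```python
import math

Vector = list[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def is_unfamiliar(case: Vector, reference: list[Vector], threshold: float) -> bool:
    """A case is unfamiliar if no reference case is at least `threshold` similar to it."""
    return max(cosine(case, ref) for ref in reference) < threshold


def shift_alert(
    recent: list[Vector],
    reference: list[Vector],
    threshold: float = 0.9,
    max_fraction: float = 0.2,
) -> tuple[float, bool]:
    """Return the fraction of unfamiliar recent cases and whether it exceeds max_fraction."""
    if not recent:
        raise ValueError("need at least one recent case")
    flags = [is_unfamiliar(case, reference, threshold) for case in recent]
    fraction = sum(flags) / len(flags)
    return fraction, fraction > max_fraction


# Hypothetical reference population (e.g. cases seen during model development).
reference = [[1.0, 0.0], [0.9, 0.1], [0.8, 0.2]]
stable = [[1.0, 0.05], [0.85, 0.15]]
shifted = [[0.1, 1.0], [0.0, 1.0], [1.0, 0.0]]

print(shift_alert(stable, reference))   # (0.0, False)
print(shift_alert(shifted, reference))  # two of three cases unfamiliar: alert
```

Because the check needs no labelled outcomes, it can raise an alert before enough ground truth has accumulated to measure a drop in quality.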

The tight collaboration between the Avaloq data science team and NEC Labs allows us to leverage NEC’s expertise in automatically monitoring AI systems, applying bias and population shift tests directly to encrypted data.

Conclusion

At Avaloq, we care about data privacy and fairness. We employ sophisticated monitoring tools to make sure your models are not only accurate, but also fair and ethical. We see AI regulation as a positive trend and choose to fully embrace it to make your data science user journey smoother from end to end.

Do you have any questions about this report or the insights we’ve shared? We welcome you to get in touch with one of our experts.


Figure 1. Development pros and cons of rule-based systems versus machine learning systems

| | Rule-based programming | Machine learning |
|---|---|---|
| Flexibility / debugging | The developer has full control over the rules | Less direct control over the rules |
| Data availability | A few examples are enough to start building the rules | Large (labelled) datasets are usually required to train a model |
| Development time | A first basic solution is faster to implement | A full-fledged solution is faster to implement |
| Robustness (edge cases / new patterns) | It may be complex to set rules that also cover details or minor effects | The algorithm learns to extract the most information available in the data |
| Extension to similar problems | Rules usually have to be rebuilt almost from scratch | For a similar problem in a different use case, good results may be obtained by changing the training dataset |
| Maintenance | The rules have to be kept up to date, usually with manual intervention | The model can be kept up to date by feeding it new data for training |
| Knowledge | High dependence on domain-specific knowledge for the definition of rules (resources, bias) | Domain-specific knowledge is central only in the validation phase |

Contact and authors

Gery Zollinger, Group Product Manager Insight, Avaloq, gery.zollinger@avaloq.com

Dr. Shardul Paricharak, Senior Data Scientist, Avaloq, shardul.paricharak@avaloq.com

Tsvi Lev, Managing Director, Israeli Research Center, NEC, tsvi.lev@necam.com

Dr. Yaacov Hoch, Senior Director of Innovation, NEC, Yaacov.Hoch@necam.com

1. Human agency and oversight: AI systems should empower human beings, allowing them to make informed decisions, whilst maintaining adequate oversight.

Is a negative outcome possible for the end-user?

- No: Group A models. All non-identifying attributes are used. Fairness is ensured automatically, as no unfavorable outcome is possible. Measures: quality and drift monitored continuously. Example: recommender system to suggest trade ideas within an approved investment context.
- Yes: Group B models, treated as high-risk models. Measures: sensitive attributes removed; attributes with high correlation to sensitive attributes removed; fairness, quality and drift monitored continuously. Examples: credit risk scoring, compliance risk scoring, smart recruiting system.

Would modern chatbots stand the Turing Test?

In 1950, Alan Turing posed the question that still forms the core of any artificial intelligence technology: “Can machines think?” To answer it, he envisioned the now famous Turing Test. The setting is simple: a human judge gets the chance to ask questions of an unknown party in another room. The machine, or the software as we would say nowadays, passes the test when it successfully tricks the judge into believing it is human. The revolutionary aspect of this approach is that Turing did not try to define and measure ‘thinking’, but equated it with the ability to imitate a human being.

And now? Would Alexa and Siri pass the Turing Test from the 1950s?

2. Technical robustness and safety: AI systems should be resilient and secure.
3. Privacy and data governance: Besides ensuring full respect for privacy and data protection, adequate data governance mechanisms must also be ensured, considering the quality and integrity of the data and ensuring legitimized access to the data.
4. Transparency: The data, system and AI business models should be transparent.
5. Diversity, non-discrimination and fairness: Unfair bias and discrimination must be avoided.
6. Societal and environmental well-being: AI systems should benefit all human beings, including future generations. They must hence be sustainable and environmentally friendly.
7. Accountability: AI systems should be accountable and auditable.

Avaloq Insight features and the EC criteria they cover:

- Data lineage tracking: 3. Privacy and data governance; 4. Transparency; 7. Accountability
- Prediction logging: 6. Societal and environmental well-being
- Row-level security: 2. Technical robustness and safety
- Monitoring concept: 1. Human agency and oversight; 5. Diversity, non-discrimination and fairness

Figure 4. Avaloq’s ethical AI Approach

Figure 2. The European Commission’s seven key requirements for AI systems

Figure 3. All Avaloq Insight models benefit from many features which directly meet five of the seven key criteria proposed by the EC for AI systems

The progressiveness of the EC with respect to the high standard of regulation in the space of AI creates an opportunity for an “ethical AI” industry in Europe. This ultimately benefits the end-user.


Group A models

An example of a non-sensitive model could be a recommender system that suggests trade ideas within an investment context pre-approved by the end client. For all models belonging to group A, all available non-identifying attributes are used in the modeling process. As these models have no way of creating an unfavorable outcome for the end-user, fairness is ensured automatically.

Group B models

All models in group B are held to the high level of ethical requirements proposed for “high-risk AI models”, even if they are deemed to be of lower risk [9]. In addition, very-high-risk models, such as those involving biometric identification, will not be used at all. This is one of the ways Avaloq exceeds the minimum requirements set by one of the most progressive regulatory bodies in the world. Group B models are subjected to a multitude of measures (Figure 4). Sensitive attributes are identified and omitted from the list of features, and attributes with a high correlation (0.85 or higher) to the sensitive attributes are removed as well. The remaining attributes are classified as a “legally selectable universe of attributes”. Finally, a post-deployment monitoring system is put in place, satisfying criterion 1 (human agency and oversight) proposed by the EC (see the section on AI ethics and regulation). This system continuously monitors several key metrics (e.g., a maximum deviation of 15% from the expected value is tolerated). These key metrics are fairness, quality and drift, and they deserve some further explanation.
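The attribute filtering step can be sketched in a few lines of Python. This minimal version assumes numeric attribute columns and uses the absolute Pearson correlation, so that strong negative correlations also count as proxies; whether Avaloq uses Pearson correlation, absolute values or another association measure is an assumption here, as are the column names and values.

```python
import math


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient; 0.0 if either column is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def legally_selectable(
    columns: dict[str, list[float]],
    sensitive: set[str],
    threshold: float = 0.85,
) -> list[str]:
    """Drop sensitive attributes and any attribute with |correlation| >= threshold to one of them."""
    selected = []
    for name, values in columns.items():
        if name in sensitive:
            continue  # sensitive attributes are never used as features
        if all(abs(pearson(values, columns[s])) < threshold for s in sensitive):
            selected.append(name)
    return selected


# Hypothetical attribute columns for six clients.
columns = {
    "age": [25, 32, 41, 50, 63, 29],              # sensitive
    "years_employed": [3, 9, 18, 27, 38, 6],      # close proxy for age
    "account_balance": [10, 80, 20, 60, 30, 50],  # largely unrelated to age
}

print(legally_selectable(columns, sensitive={"age"}))  # ['account_balance']
```

Removing proxies matters because dropping a sensitive attribute alone does not stop a model from reconstructing it from correlated features such as years of employment.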
